Artificial intelligence has been a staple in computer science since the 1950s. Over the years, it has also made a lot of money for the businesses able to deploy it effectively. However, as we explained in a recent op-ed piece for the Wall Street Journal—which is a good starting point for the more detailed argument we make here—most of those gains have gone to large incumbent vendors (like Google or Meta) rather than to startups. Until very recently—with the advent of generative AI and all that it encompasses—we’ve not seen AI-first companies that seriously threaten the profits of their larger, established peers via direct competition or entirely new behaviors that make old ones obsolete.

With generative AI applications and foundation models (or frontier models), however, things look very different. Incredible performance and adoption, combined with a blistering pace of innovation, suggest we could be in the early days of a cycle that will transform our lives and economy at levels not seen since the microchip and the internet.

This post explores the economics of traditional AI and why it has typically been difficult for startups using AI as a core differentiator to reach escape velocity (something we’ve written about in the past). It then covers why generative AI applications and large foundation-model companies look very different, and what that may mean for our industry.

Capability != economics

The issue with AI historically is not that it doesn’t work—it has long produced mind-bending results—but rather that it’s been resistant to building attractive pure-play business models in private markets. Looking at the fundamentals, it’s not hard to see why getting great economics from AI has been tough for startups.

The tail is long

Many AI products need to ensure they provide high accuracy even in rare situations, often referred to as “the tail.” And while any given situation may be rare on its own, there tend to be a lot of rare situations in aggregate. This matters because as instances get rarer, the level of investment needed to handle them can skyrocket. These are perverse economies of scale that startups struggle to rationalize.

For example, it may take an investment of $20 million to build a robot that can pick cherries with 80% accuracy, but the required investment could balloon to $200 million if you need 90% accuracy. Getting to 95% accuracy might take $1 billion. Not only is that a ton of upfront investment to get adequate levels of accuracy without relying too much on humans (otherwise, what is the point?), but it also results in diminishing marginal returns on capital invested. In addition to the sheer amount of dollars that may be required to hit and maintain the desired level of accuracy, the escalating cost of progress can serve as an anti-moat for leaders—they burn cash on R&D while fast-followers build on their learnings and close the gap for a fraction of the cost.
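Both ideas above (rare cases adding up in aggregate, and accuracy getting steeply more expensive) can be made concrete with a back-of-the-envelope sketch. It assumes, purely for illustration, that situation types follow a Zipf distribution with exponent 1 over one million types. The helper names `zipf_tail_mass` and `marginal_cost_per_point` are hypothetical, and the (cost, accuracy) pairs are the hypothetical cherry-picking figures from the example.

```python
# Illustrative sketch only: the Zipf assumption, the number of situation
# types, and the head size are made up; the cost curve is the cherry-picking
# example from the text.


def zipf_tail_mass(num_types: int, head: int, s: float = 1.0) -> float:
    """Share of all occurrences falling outside the `head` most common types,
    assuming type k occurs with probability proportional to 1 / k**s."""
    weights = [1.0 / k**s for k in range(1, num_types + 1)]
    return sum(weights[head:]) / sum(weights)


# (investment in USD, accuracy in percent) from the cherry-picking example
COST_CURVE = [(20e6, 80), (200e6, 90), (1e9, 95)]


def marginal_cost_per_point(curve):
    """Dollars spent per additional percentage point of accuracy, per step."""
    return [
        (c1 - c0) / (a1 - a0)
        for (c0, a0), (c1, a1) in zip(curve, curve[1:])
    ]


if __name__ == "__main__":
    tail = zipf_tail_mass(num_types=1_000_000, head=100)
    print(f"Cases outside the 100 most common types: {tail:.0%}")
    for cost in marginal_cost_per_point(COST_CURVE):
        print(f"${cost / 1e6:,.0f}M per extra accuracy point")
```

Under these assumptions, nearly two-thirds of all cases fall outside the 100 most common situation types, and each extra point of accuracy costs about $18M between 80% and 90% but about $160M between 90% and 95%.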

Correctness matters

Many of the traditional AI problem domains aren’t particularly tolerant of wrong answers. For example, customer success bots should never offer bad guidance, optical character recognition (OCR) for check deposits should never misread bank accounts, and (of course) autonomous vehicles shouldn’t do any number of illegal or dangerous things. Although AI has proven to be more accurate than humans for some well-defined tasks, humans often perform better for long-tail problems where context matters. Thus, AI-powered solutions often still use humans in the loop to ensure accuracy, a situation that can be difficult to scale and often becomes a burdensome cost that weighs on gross margins.

Human brains and bodies are cheap and awesome at certain things

The human body and brain comprise an analog machine that’s evolved over hundreds of millions of years to navigate the physical world. It consumes roughly 150 watts of energy, it runs on a bowl of porridge, it’s quite good at tackling problems in the tail, and the global average wage is roughly $5 an hour. For some tasks in some parts of the world, the average wage is less than a dollar a day.

For many applications, AI is not competing with a traditional computer program, but with a human. And when the job involves one of the more fundamental capabilities of carbon life, such as perception, humans are often cheaper. Or, at least, it’s far cheaper to get reasonable accuracy with a relatively small investment by using people. This is particularly true for startups, which typically don’t have a large, sophisticated AI infrastructure to build from.

It’s also worth noting that AI is often held to a higher goalpost than simply what humans can achieve (why change the system if the new one isn’t significantly better?). So, even in cases where AI is obviously better, it’s still at a disadvantage.

Lack of new emergent user behavior

This is a very important, yet underappreciated, point. Likely as a result of AI largely being a complement to existing products from incumbents, it has not introduced many new use cases that have translated into new user behaviors across the broader consumer population. New user behaviors tend to underlie massive market shifts because they often start as fringe secular movements the incumbents don’t understand, or don’t care about. (Think about the personal microcomputer, the Internet, personal smartphones, or the cloud.) This is fertile ground for startups to cater to emergent consumer needs without having to compete against entrenched incumbents in their core areas of focus.

There are exceptions, of course, such as the new behaviors introduced by home voice assistants. But even these underscore how dominant the incumbents are in AI products, given the noticeable lack of widely adopted independents in this space.

Autonomous vehicles as a microcosm of AI challenges

Autonomous vehicles (AVs) are an extreme but illustrative example of why AI is hard for startups. AVs require tail correctness (getting things wrong is very, very bad); operational AV systems often rely on a lot of human oversight; and they compete with the human brain at perception (which runs at about 12 watts vs. some high-end CPU/GPU AV setups that consume over 1,300 watts). So while there are many reasons to move to AVs, including safety, efficiency, and traffic management, the economics are still not quite there when compared to ride-sharing services, let alone just driving yourself. This is despite an estimated $75 billion having been invested in AV technology.
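The power comparison above can be put in numbers with a quick sketch. It uses only the two wattages quoted in the text; the 8-hour shift is a hypothetical duration chosen for illustration, and the helper names are our own.

```python
# Power draw figures quoted in the text; both are rough estimates.
BRAIN_WATTS = 12
AV_STACK_WATTS = 1_300  # "over 1,300 watts", so the ratio is a lower bound
SHIFT_HOURS = 8         # hypothetical driving shift, for illustration only


def power_ratio(machine_watts: float, brain_watts: float) -> float:
    """How many times more power the machine draws than the brain."""
    return machine_watts / brain_watts


def energy_kwh(watts: float, hours: float) -> float:
    """Energy consumed at a constant power draw, in kilowatt-hours."""
    return watts * hours / 1000


ratio = power_ratio(AV_STACK_WATTS, BRAIN_WATTS)
print(f"AV compute draws about {ratio:.0f}x the brain's power")
print(f"{SHIFT_HOURS}-hour shift: {energy_kwh(AV_STACK_WATTS, SHIFT_HOURS):.1f} kWh "
      f"vs {energy_kwh(BRAIN_WATTS, SHIFT_HOURS):.3f} kWh")
```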

Of course, there are narrower use cases that are more compelling, such as trucking or well-defined campus routes. Also, the economics are improving all the time and are likely to beat those of human drivers soon. But considering the level of investment and time it’s taken to get us here, plus the ongoing operational complexity and risks, it’s little wonder that generalized AVs have largely become an endeavor of large public companies, whether via incubation or acquisition.

The dreaded AI mediocrity spiral in private markets

For the reasons we laid out above, the difficulty of creating a high-margin, high-growth business where AI is the core differentiator has resulted in a well-known slog for startups attempting to do so. This hypothetical from the Wall Street Journal piece nicely encapsulates it:

In order for the startup to have sufficient correctness early on, it hires humans to perform the function it hopes the AI will automate over time. Often, this is part of an escalation path where a first cut of the AI will handle 80% of the common use cases, and humans manage the tail.

Early investors tend to be more focused on growth than on margins, so in order to raise capital and keep the board happy, the company continues to hire people rather than invest in the automation—which is proving tricky anyway because of the aforementioned complications with the long tail. By the time the company is ready for growth-level investment, it has already built out an entire organization around hiring and operationalizing humans in the loop, and it’s too difficult to unwind. The result is a business that can show relatively high initial growth, but maintains a low margin and, over time, becomes difficult to scale.
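The escalation path in this hypothetical can be captured in a toy gross-margin model. Every price and cost below is an invented placeholder (nothing here comes from the article or the WSJ piece), and the model simplifies by charging escalated tasks only the human cost. What it illustrates is qualitative: while humans handle the tail, their cost dominates the blended cost per task.

```python
# Toy unit-economics model of a startup with humans in the loop.
# All numbers are hypothetical placeholders, not data.
PRICE_PER_TASK = 1.00        # what the customer pays per task (USD)
AI_COST_PER_TASK = 0.02      # cost when the model fully handles the task
HUMAN_COST_PER_TASK = 2.50   # loaded labor cost when a person handles the tail


def gross_margin(automation_share: float) -> float:
    """Gross margin when `automation_share` of tasks are fully automated and
    the remainder are escalated to a human (charged only the human cost)."""
    blended_cost = (automation_share * AI_COST_PER_TASK
                    + (1 - automation_share) * HUMAN_COST_PER_TASK)
    return (PRICE_PER_TASK - blended_cost) / PRICE_PER_TASK


for share in (0.80, 0.95, 0.99):
    print(f"{share:.0%} automated -> gross margin {gross_margin(share):.0%}")
```

With these placeholder numbers, the margin sits near 48% at 80% automation and only climbs to roughly 86% and 96% as automation reaches 95% and 99%, which is why stalled automation leaves the business stuck with low margins.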

The AI mediocrity spiral is not fatal, though, and you can indeed build sizable public companies from it. But the economics and scaling tend to lag software-centric products. Thus, we’ve historically not seen a wave of fast-growing AI startups that have had the momentum to destabilize the incumbents. Rather, they tend to steer toward the harder, grittier, more complex problems—or become services companies building bespoke solutions—because they have the people on hand to deal with those types of things.

With generative AI, however, this is all changing.

How generative AI and foundation models are different

Over the last couple of years, we’ve seen a new wave of AI applications built on top of or incorporating large foundation models. This trend is commonly referred to as generative AI, because the models are used to generate content (image, text, audio, etc.), or simply as large foundation models, because the underlying technologies can be adapted to tasks beyond just content generation. For the purposes of this post, we’ll refer to it all as generative AI.

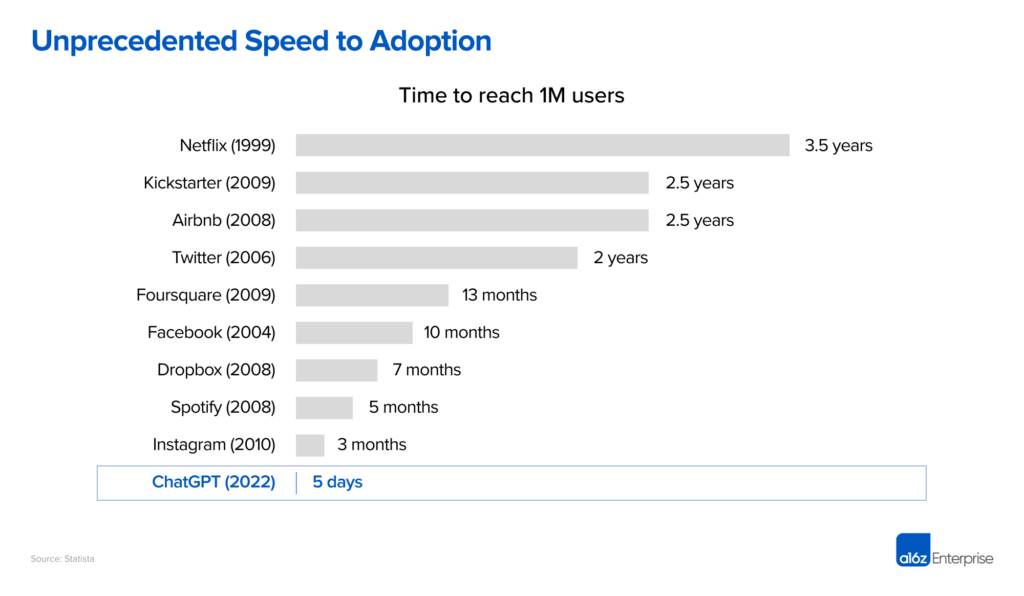

Given the long history of AI, it’s easy to brush this off as yet another hype cycle that will eventually cool. This time, however, AI companies have demonstrated unprecedented consumer interest and speed to adoption. Since entering the zeitgeist in mid-to-late 2022, generative AI has already produced some of the fastest-growing companies, products, and projects we’ve seen in the history of the technology industry. Case in point: ChatGPT took only 5 days to reach 1 million users, leaving some of the world’s most iconic consumer companies in the dust (Threads from Meta recently reached 1 million in a few hours, but it was bootstrapped from an existing social graph, so we don’t view that as an apples-to-apples comparison).

What’s even more compelling than the rapid early growth is its sustained nature and scale beyond the novelty of the product’s initial launch. In the 6 months since its launch, ChatGPT reached an estimated 230-million-plus worldwide monthly active users (MAUs) per Yipit. It took Facebook until 2009 to achieve a comparable 197 million MAUs—more than 5 years after its initial launch to the Ivy League and 3 years after the social network became available to the general public.
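A rough way to compare the two trajectories is the average number of MAUs added per month, using only the figures above. As a simplifying assumption, Facebook’s “more than 5 years” is treated as exactly 60 months (so its rate is an upper bound), and ChatGPT’s “230-million-plus” is treated as exactly 230 million over 6 months (so its rate is a lower bound).

```python
# Average monthly active users added per month, from the figures in the text.
def mau_per_month(mau: float, months: float) -> float:
    """Average MAUs gained per month since launch."""
    return mau / months


chatgpt = mau_per_month(230e6, 6)    # ~230M MAUs about 6 months after launch
facebook = mau_per_month(197e6, 60)  # 197M MAUs after more than 5 years
print(f"ChatGPT:  ~{chatgpt / 1e6:.1f}M MAUs added per month")
print(f"Facebook: ~{facebook / 1e6:.1f}M MAUs added per month")
print(f"Ratio:    ~{chatgpt / facebook:.0f}x")
```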

While ChatGPT is a clear AI juggernaut, it is by no means the only generative AI success story:

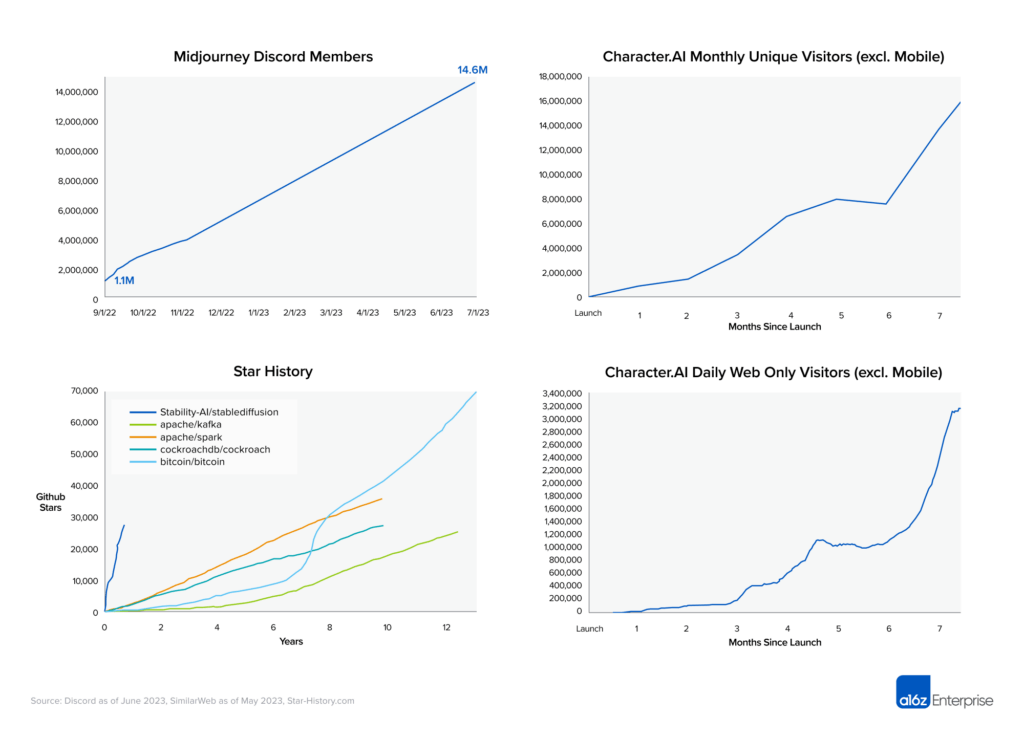

- Midjourney, a text-to-image AI company, saw its Discord server balloon to nearly 15 million members in June 2023, less than a year after launching its open beta in July 2022, making it the largest server on Discord.

- Character.AI, a vertically integrated AI companion provider, hit an estimated 18 million monthly active unique web visitors and more than 3 million daily active web users (per SimilarWeb) just 9 months after launch, excluding a successful mobile launch in May. The user base growth is particularly impressive in light of the engagement per user—active users (defined as users who send at least 1 message on the platform) average over 2 hours on the platform daily.

- Newer startups, such as chatbot company Janitor AI, have self-reported achieving over 1 million users within a few weeks of launch.

The AI developer market is also seeing tremendous growth. For example, the release of the large image model Stable Diffusion blew away some of the most successful open-source developer projects in recent history with regard to speed and prevalence of adoption. Meta’s Llama 2 large language model (LLM) attracted many hundreds of thousands of users, via platforms such as Replicate, within days of its release in July.

These unprecedented levels of adoption are a big reason why we believe there’s a very strong argument that generative AI is not only economically viable, but that it can fuel levels of market transformation on par with the microchip and the Internet.

To understand why this is the case, it’s worth looking at how generative AI is different from previous attempts to commercialize AI.

Correctness is overrated

Many of the use cases for generative AI are not within domains that have a formal notion of correctness. In fact, the two most common use cases currently are creative generation of content (images, stories, etc.) and companionship (virtual friend, coworker, brainstorming partner, etc.). In these contexts, being correct simply means “appealing to or engaging the user.” Further, other popular use cases, like helping developers write software through code generation, tend to be iterative, wherein the user is effectively the human in the loop also providing the feedback to improve the answers generated. Users can guide the model toward the answer they’re seeking, rather than requiring the company to staff a pool of humans to ensure immediate correctness.

Applicable to a broad set of markets

Generative AI models are incredibly general and already are being applied to a broad variety of large markets. This includes images, videos, music, games, and chat. The games and movie industries alone are worth more than $300 billion. Further, the LLMs really do understand natural language, and therefore are being pushed into service as a new consumption layer for programs. We’re also seeing broad adoption in areas of professional pairwise interaction such as therapy, legal, education, programming, and coaching.

This all said, existing markets are only a proof point of value, and perhaps merely a launch point for generative AI. Historically, when economics and capabilities shift this dramatically, as was the case with the Internet, we see the emergence of entirely new behaviors and markets that are both impossible to predict and much larger than what preceded them.

Far better than humans at high-value tasks

Historically, much effort in AI has focused on replicating tasks that are easy for humans, such as object identification or navigating the physical world: essentially, things that involve perception. However, these tasks are easy for humans because the brain has evolved over hundreds of millions of years, optimizing specifically for them (picking berries, evading lions, etc.). Therefore, as we discussed above, getting the economics to work relative to a human is hard.

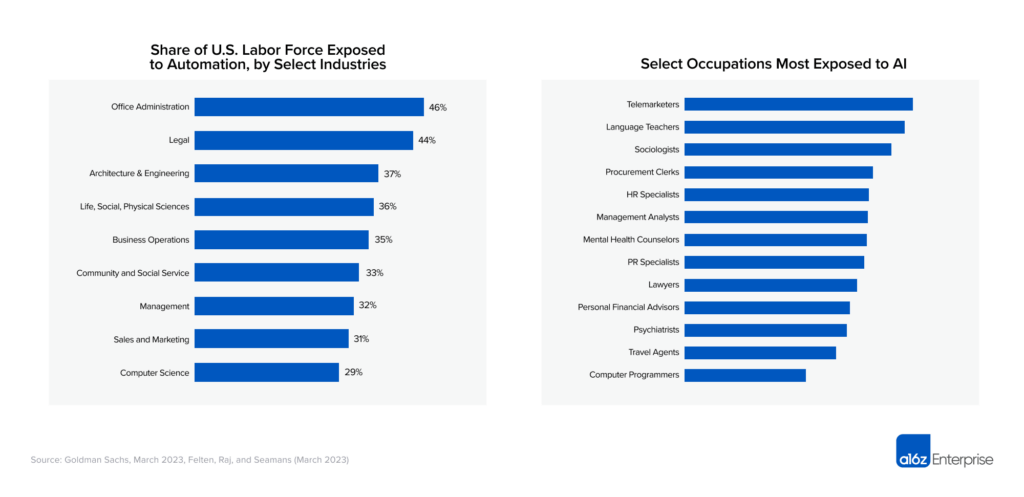

Generative AI, on the other hand, automates natural language processing and content creation—tasks the human brain has spent far less time evolving toward (arguably less than 100,000 years). Generative AI can already perform many of these tasks orders-of-magnitude cheaper, faster, and, in some cases, better than humans. Because these language-based or “creative” tasks are harder for humans and often require more sophistication, such white-collar jobs (for example, programmers, lawyers, and therapists) tend to demand higher wages.

So while an agricultural worker in the U.S. gets on average $15 an hour, white-collar workers in the roles mentioned above are paid hundreds of dollars an hour. However, while we don’t yet have robots with the fine motor skills necessary for picking strawberries economically, you’ll see when we break down the costs that generative AI can perform similarly to these high-value workers at a fraction of the cost and time.

All sorts of new user behavior

The new user behaviors that have emerged with the generative AI wave are as startling as the economics have been. LLMs have been pulled into service as software development partners, brainstorming companions, educators, life coaches, friends, and yes, even lovers. Large image models have become central to new communities built entirely around the creation of fanciful new content, or the development of AI art therapy to help treat mental health issues. These are functions that computers have not, to date, been able to fulfill, so we don’t really have a good understanding of what the behavior will lead to, nor which products will best serve it. This all means opportunity for the new class of private generative AI companies that are emerging.

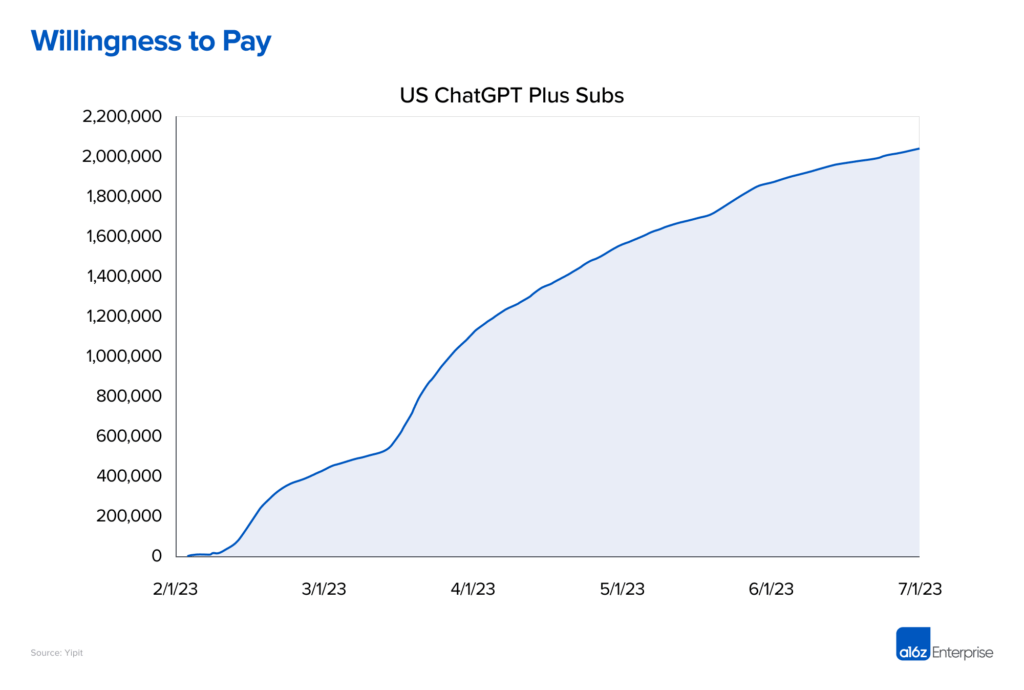

Although the use cases for this new behavior are still emerging or being created, users have, critically, already shown a willingness to pay. Many of the new generative AI companies have shown tremendous revenue growth in addition to the aforementioned user growth. Subscriber estimates for ChatGPT imply close to $500 million in annualized run-rate revenue from U.S. subscribers alone. ChatGPT aside, companies across a number of industries (including legal, copywriting, image generation, and AI companionship, to name a few) have achieved impressive and rapid revenue scale, reaching up to hundreds of millions of dollars in run-rate revenue within their first year. For a few companies that own and train their own models, this revenue growth has even outpaced heavy training costs as well as inference costs (that is, the variable costs to serve customers). The result is companies that are already, or will soon be, self-sustaining.
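Run-rate revenue simply annualizes current monthly revenue. As a rough sanity check on the ChatGPT figure, the sketch below inverts that relationship to see what subscriber count it implies, assuming every subscriber pays the $20/month ChatGPT Plus list price and ignoring taxes, discounts, and churn. The helper names are hypothetical.

```python
# Run-rate revenue annualizes monthly recurring revenue: run_rate = 12 * MRR.
# Assumption: every subscriber pays the $20/month ChatGPT Plus list price.
PLUS_PRICE_PER_MONTH = 20.0


def run_rate(subscribers: float, monthly_price: float) -> float:
    """Annualized revenue from a subscriber base at a flat monthly price."""
    return subscribers * monthly_price * 12


def implied_subscribers(run_rate_usd: float, monthly_price: float) -> float:
    """Subscriber count needed to produce a given run rate."""
    return run_rate_usd / (monthly_price * 12)


subs = implied_subscribers(500e6, PLUS_PRICE_PER_MONTH)
print(f"~{subs / 1e6:.1f}M paying U.S. subscribers implied")
```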

Just as the time to 1 million users has been truncated, so has the time it takes for many AI companies to hit $10-million-plus of run-rate revenue, often a fundraising hallmark for achieving product-market fit.

Let’s run the numbers

As a motivating example, let’s look at the simple task of creating an image. Currently, the quality of images produced by these models is on par with that of work by human artists and graphic designers, and we’re approaching photorealism. As of this writing, the compute cost to create an image using a large image model is roughly $0.001 and it takes around 1 second. Doing a similar task with a designer or a photographer would cost hundreds of dollars (minimum) and many hours or days (accounting for work time, as well as schedules). Even if, for simplicity’s sake, we underestimate the cost to be $100 and the time to be 1 hour, generative AI is 100,000 times cheaper and 3,600 times faster than the human alternative.
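The arithmetic behind those two ratios is simple division, and taking the base-10 logarithm expresses each ratio in orders of magnitude. This sketch uses only the figures stated above; the function name is our own.

```python
import math

# Figures from the text; the human figures are deliberately low estimates.
MODEL_COST_USD, MODEL_TIME_S = 0.001, 1
HUMAN_COST_USD, HUMAN_TIME_S = 100, 60 * 60


def improvement_factor(baseline: float, new: float) -> float:
    """How many times smaller `new` is than `baseline`."""
    return baseline / new


cost_ratio = improvement_factor(HUMAN_COST_USD, MODEL_COST_USD)
time_ratio = improvement_factor(HUMAN_TIME_S, MODEL_TIME_S)
print(f"{cost_ratio:,.0f}x cheaper (~{math.log10(cost_ratio):.1f} orders of magnitude)")
print(f"{time_ratio:,.0f}x faster (~{math.log10(time_ratio):.1f} orders of magnitude)")
```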

A similar analysis can be applied to many other tasks. For example, the cost for an LLM to summarize and answer questions on a complex legal brief is a fraction of a penny, while a lawyer would typically charge hundreds (and up to thousands) of dollars per hour and would take hours or days. The cost of an LLM therapist would also be pennies per session. And so on.

The occupations and industries impacted by the economics of AI expand well beyond the few examples listed above. We anticipate the economic value of generative AI to have a transformative and overwhelming impact on areas ranging from language education to business operations, and the magnitude of this impact to be positively correlated with the median wage of that industry. This will drive a bigger cost delta between the status quo and the AI alternative.

Of course, the LLMs would actually have to be good at these functions to realize that economic value. For this, the evidence is mounting: every day we gather more examples of generative AI being used effectively in practice for real tasks. The models continue to improve at a startling pace, and thus far are doing so without untenable increases in training costs or product pricing. We’re not suggesting that large models can or will replace all work of this sort (there is little indication of that at this point), just that the economics are stunning for every hour of work that they save.

None of this is scientific, mind you, but if you sketch out an idealized case where a model is used to perform an existing service, the numbers tend to be 3-4 orders of magnitude cheaper than the current status quo, and commonly 2-3 orders of magnitude faster.

An extreme example would be the creation of an entire video game from a single prompt. Today, companies create models for every aspect of a complex video game—3D models, voice, textures, music, images, characters, stories, etc.—and creating a AAA video game today can take hundreds of millions of dollars. The cost of inference for an AI model to generate all the assets needed in a game is a few cents or tens of cents. These are microchip- or Internet-level economics.

The third epoch of compute?

So, are we just fueling another hype bubble that fails to deliver? We don’t think so. Just as the microchip brought the marginal cost of compute to zero, and the Internet brought the marginal cost of distribution to zero, generative AI promises to bring the marginal cost of creation to zero.

Interestingly, the gains offered by the microchip and the Internet were also about 3-4 orders of magnitude. (These are all rough numbers, primarily to illustrate a point. It’s a very complex topic, but we want to provide a rough sense of how disruptive the Internet and the microchip were to the time and cost of doing things in their day.) For example, ENIAC, the first general-purpose programmable computer, was 5,000 times faster than any other calculating machine at the time, and purportedly could compute the trajectory of a missile in 30 seconds, compared with at least 30 hours by hand.
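Both ENIAC figures can be checked against the “3-4 orders of magnitude” claim with a couple of lines of arithmetic. The sketch uses only the numbers quoted above; the constant and function names are our own.

```python
import math

# Figures quoted in the text for ENIAC.
BY_HAND_SECONDS = 30 * 60 * 60   # "at least 30 hours" by hand
ENIAC_SECONDS = 30               # "30 seconds" on ENIAC
HEADLINE_SPEEDUP = 5_000         # "5,000 times faster" than other machines


def orders_of_magnitude(factor: float) -> float:
    """Base-10 logarithm of an improvement factor."""
    return math.log10(factor)


trajectory_speedup = BY_HAND_SECONDS / ENIAC_SECONDS
for label, factor in (("trajectory vs. by hand", trajectory_speedup),
                      ("vs. other calculating machines", HEADLINE_SPEEDUP)):
    print(f"{label}: {factor:,.0f}x "
          f"(~{orders_of_magnitude(factor):.1f} orders of magnitude)")
```

Both factors land between 3 and 4 orders of magnitude (3,600x and 5,000x), consistent with the range above.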

Similarly, the Internet dramatically changed the calculus for moving bits across great distances. Once adequate Internet bandwidth arrived, you could download software in minutes rather than receiving it by mail in days or weeks, or driving to the local Fry’s to buy it in person. Or consider the vast efficiencies of sending emails, streaming video, or using basically any cloud service. The cost per bit decades ago was around $2 × 10^-10, so sending, say, 1 kilobyte was orders of magnitude cheaper than the price of a stamp.
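The stamp comparison works out as follows. This sketch treats the per-bit figure as dollars per bit (as the comparison with a stamp implies), takes 1 kilobyte as 1,000 bytes, and uses a hypothetical $0.25 stamp price purely for illustration.

```python
import math

COST_PER_BIT_USD = 2e-10   # figure from the text, read as dollars per bit
STAMP_USD = 0.25           # hypothetical stamp price, for comparison only
BITS_PER_KB = 8 * 1000     # 1 kilobyte taken as 1,000 bytes


def transfer_cost(bits: int, cost_per_bit: float = COST_PER_BIT_USD) -> float:
    """Cost of sending `bits` at a flat per-bit price."""
    return bits * cost_per_bit


kb_cost = transfer_cost(BITS_PER_KB)
print(f"1 KB: ${kb_cost:.2e} vs. stamp ${STAMP_USD:.2f} "
      f"(~{math.log10(STAMP_USD / kb_cost):.1f} orders of magnitude cheaper)")
```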

For our dollar, generative AI holds a similar promise when it comes to the cost and time of generating content—everything from writing an email to producing an entire movie. Of course, all of this assumes that AI scaling continues and we continue to see massive gains in economics and capabilities. As of this writing, many of the experts we talk to believe we’re in the very early innings for the technology and we’re very likely to see tremendous continued progress for years to come.

What about defensibility?

There is a lot of to-do about the defensibility or lack of defensibility for AI companies. It’s an important conversation to have and, indeed, we’ve written about it. But when the economic benefits are as compelling as they are with generative AI, there is ample velocity to build a company around more traditional defensive moats such as scale, network effects, the long tail of enterprise distribution, brand, etc. In fact, we’re already seeing seemingly defensible business models arise in the generative AI space around two-sided marketplaces between model creators and model users, and communities around creative content.

So even though there doesn’t seem to be obvious defensibility endemic to the tech stack (if anything, it looks like there remain perverse economics of scale), we don’t believe this will hamper the impending market shift.

Broadly, we believe that a drop in the marginal cost of creation will massively drive demand. Historically, in fact, the Jevons paradox consistently proves true: When the marginal cost of a good with elastic demand (e.g., compute or distribution) goes down, demand increases by more than enough to compensate. The result is more jobs, more economic expansion, and better goods for consumers. This was the case with the microchip and the Internet, and it’ll happen with generative AI, too.
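The elastic-demand condition can be illustrated with a textbook constant-elasticity demand curve, where quantity scales as price raised to the power of minus the elasticity. The scale factor, prices, and elasticities below are illustrative assumptions. When elasticity exceeds 1, a price drop raises total spending; when it is below 1, spending falls.

```python
# Constant-elasticity demand: quantity = k * price ** (-elasticity).
# The scale k, the prices, and the elasticities are illustrative assumptions.
def quantity(price: float, elasticity: float, k: float = 1.0) -> float:
    """Quantity demanded at a given price."""
    return k * price ** (-elasticity)


def total_spend(price: float, elasticity: float, k: float = 1.0) -> float:
    """Total expenditure: price times quantity demanded."""
    return price * quantity(price, elasticity, k)


for e in (0.5, 1.5):
    before = total_spend(1.0, e)
    after = total_spend(0.1, e)  # price falls 10x
    change = "rises" if after > before else "falls"
    print(f"elasticity {e}: total spend {change} from {before:.2f} to {after:.2f}")
```

With elasticity 1.5, a tenfold price cut raises total spending from 1.00 to about 3.16; with elasticity 0.5, it drops to about 0.32.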

If you’ve ever wanted to start a company, now is the time to do it. And please keep in touch along the way 🙂