Virtualization has been a key driver behind every major trend in software, from search to social networks to SaaS, over the past decade. In fact, most of the applications we use — and cloud computing as we know it today — would not have been possible without the server utilization and cost savings that resulted from virtualization.

But now, new cloud architectures are reimagining the entire data center. Virtualization as we know it can no longer keep up.

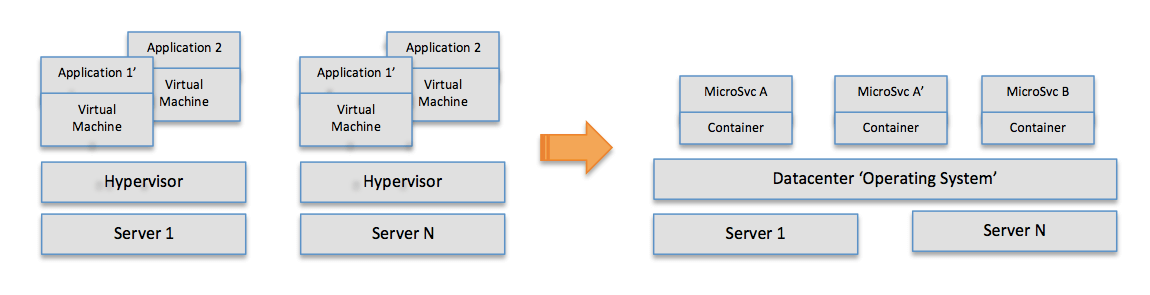

As data centers transform, the core insight behind virtualization, that of carving up a large, expensive server into several virtual machines, is being turned on its head. Instead of divvying up the resources of individual servers, these architectures aggregate large numbers of servers into a single warehouse-scale (though still virtual!) “computer” to run highly distributed applications.

Every IT organization and developer will be affected by these changes, especially as scaling demands increase and applications get more complex every day. How can companies that have already invested in the current paradigm of virtualization understand the shift? What’s driving it? And what happens next?

Virtualization then and now

Perhaps the best way to approach the changes happening now is through the shifts that came before them, and the leading players behind each one.

That story begins in the mainframe era, with IBM. Back in the 1960s and 1970s, the company needed a way to cleanly support older versions of its software on newer-generation hardware and to turn its powerful computers from a batch system that ran one program at a time to an interactive system that could support multiple users and applications. IBM engineers came up with the concept of a “virtual machine” as a way to carve up resources and essentially timeshare the system across applications and users while preserving compatibility.

This approach cemented IBM’s place as the market leader in mainframe computing.

Fast-forward to the early 2000s, and a different problem was brewing. Enterprises faced data centers full of expensive servers running at very low utilization. Furthermore, as Moore’s Law kept doubling the number of transistors on a chip roughly every two years, processors had grown more powerful and moved to multiple cores, yet the software stack was unable to effectively use the newer processors and all those cores.

Again, the solution was a form of virtualization. VMware, then a startup out of Stanford, enabled enterprises to dramatically increase the utilization of their servers by packing multiple applications onto a single server box. Because it could run existing software unmodified alongside new software, VMware also bridged the gap between the lagging software stack and modern multicore processors. Finally, VMware allowed Windows and Linux virtual machines to run on the same physical hosts, removing the need to dedicate separate physical servers to Windows and Linux clusters within the same data center.

Virtualization thus established a stranglehold on every enterprise data center.

But in the late 2000s, a quiet technology revolution got under way at companies like Google and Facebook. Faced with the unprecedented challenge of serving billions of users in real time, these Internet giants quickly realized they needed to build custom-tailored data centers with a hardware and software stack that aggregated (rather than carved up) thousands of servers in place of larger, more expensive monolithic systems.

What these smaller, cheaper servers lacked in computing power they made up for in numbers, and sophisticated software glued them together into a massively distributed computing infrastructure. The shape of the data center changed. It may have been made up of commodity parts, but the results were still orders of magnitude more powerful than traditional, state-of-the-art data centers. Linux became the operating system of choice for these hyperscale data centers, and as devops emerged as a way to manage both development and operations, virtualization lost one of its core value propositions: the ability to run different “guest” operating systems (that is, both Linux and Windows) simultaneously on the same physical server.

Microservices as a key driver

But the most interesting changes driving the aggregation of virtualization are on the application side, through a new software design pattern known as microservices architecture. Instead of monolithic applications, we now have distributed applications composed of many smaller, independent processes that communicate with each other over language-agnostic protocols such as HTTP/REST or AMQP. These services are small and highly decoupled, and each is focused on doing a single small task.
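To make “small, independent processes that communicate over HTTP” concrete, here is a minimal sketch of a single-task service built only on Python’s standard library. The service, its `/to-dollars/<cents>` route, and the JSON shape are hypothetical, chosen purely for illustration; any client in any language that speaks HTTP could call it.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib.request import urlopen


class PriceHandler(BaseHTTPRequestHandler):
    """Hypothetical single-task microservice: converts cents to dollars."""

    def do_GET(self):
        # Accept only paths like /to-dollars/1999; everything else is a 404.
        parts = self.path.strip("/").split("/")
        if len(parts) != 2 or parts[0] != "to-dollars" or not parts[1].isdigit():
            self.send_error(404)
            return
        body = json.dumps({"dollars": int(parts[1]) / 100}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence per-request logging for the example


def start_service(port=0):
    # Port 0 asks the OS for any free port; the server runs in a background thread.
    server = ThreadingHTTPServer(("127.0.0.1", port), PriceHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


if __name__ == "__main__":
    server = start_service()
    host, port = server.server_address
    with urlopen(f"http://{host}:{port}/to-dollars/1999") as resp:
        print(json.load(resp))  # {'dollars': 19.99}
    server.shutdown()
```

Because the contract is just HTTP and JSON, the caller neither knows nor cares which language the service is written in.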

Microservices quickly became the design pattern of choice for a few reasons:

First, microservices enable rapid cycle times. The old software development model of releasing an application once every few months was too slow for Internet companies, which needed to deploy new releases several times a week, or even several times a day in response to engagement metrics or similar signals. Monolithic applications were clearly unsuitable for this kind of agility because of their high change costs.

Second, microservices allow selective scaling of application components. The scaling requirements for different components within an application are typically different, and microservices allowed Internet companies to scale only the functions that needed to be scaled. Scaling older monolithic applications, on the other hand, was tremendously inefficient. Often the only way was to clone the entire application.
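The efficiency argument is easy to see with a little arithmetic. The sketch below uses made-up load and capacity figures (the component names and numbers are hypothetical) to compare sizing each component independently against cloning a whole monolith, where the busiest component dictates how many copies of everything must run.

```python
import math
from dataclasses import dataclass


@dataclass
class Component:
    name: str
    requests_per_sec: float      # observed load (hypothetical figure)
    capacity_per_replica: float  # load one instance can absorb (hypothetical)


def replicas_needed(c: Component) -> int:
    # Always keep at least one instance; otherwise enough to cover the load.
    return max(1, math.ceil(c.requests_per_sec / c.capacity_per_replica))


def scale_microservices(components):
    # Each component is sized independently of the others.
    return {c.name: replicas_needed(c) for c in components}


def scale_monolith(components):
    # A monolith can only be cloned whole, so every component gets as many
    # instances as the busiest one needs.
    copies = max(replicas_needed(c) for c in components)
    return {c.name: copies for c in components}


app = [
    Component("search", 900, 100),
    Component("checkout", 50, 100),
    Component("profile", 120, 100),
]

if __name__ == "__main__":
    micro = scale_microservices(app)
    mono = scale_monolith(app)
    print(micro, "total:", sum(micro.values()))  # total: 12
    print(mono, "total:", sum(mono.values()))    # total: 27
```

With these illustrative figures, independent scaling runs 12 component instances, while cloning the monolith runs 27, most of them idle copies of checkout and profile.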

Third, microservices support platform-agnostic development. Because microservices communicate over language-agnostic protocols, an application can be composed of microservices running on different platforms (Java, PHP, Ruby, Node, Go, Erlang, and so on), benefiting from the strengths of each individual platform. This was much more difficult, if not impractical, to implement in a monolithic application framework.

Delivering microservices

The promise of the microservices architecture would have remained unfulfilled in the world of virtual machines. To meet the demands of scaling and costs, microservices require both a light footprint and lightning-fast boot times, so hundreds of microservices can be run on a single physical machine and launched at a moment’s notice. Virtual machines lack both qualities.

That’s where Linux-based containers come in.

Both virtual machines and containers are means of isolating applications from hardware. However, unlike virtual machines, which virtualize the underlying hardware and contain an OS along with the application stack, containers virtualize only the operating system: they share the host’s kernel and contain only the application and its dependencies. As a result, containers have a very small footprint and can be launched in mere seconds. A physical machine can typically accommodate four to eight times more containers than VMs.

Containers aren’t actually new. They have existed since the days of FreeBSD Jails, Solaris Zones, OpenVZ, LXC, and so on. They’re taking off now, however, because they represent the best delivery mechanism for microservices. Looking ahead, every application of scale will be a distributed system consisting of tens if not hundreds of microservices, each running in its own container. For each such application, the ops platform will need to keep track of all of its constituent microservices — and launch or kill those as necessary to guarantee the application-level SLA.
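The duty to “keep track of all of its constituent microservices” and “launch or kill those as necessary” is commonly implemented as a reconciliation loop: compare the desired number of replicas per microservice with what is actually running, then close the gap. The sketch below shows one pass of such a loop. The service names are hypothetical, and `apply_plan` only simulates what a real platform would do through a container runtime.

```python
from __future__ import annotations

from collections import Counter


def reconcile(desired: dict[str, int], running: list[str]):
    """Compare desired replica counts with running containers (one list
    entry per container, named by its service) and return
    (to_launch, to_kill) as {service: count} dicts."""
    actual = Counter(running)
    to_launch, to_kill = {}, {}
    for service in desired.keys() | actual.keys():
        gap = desired.get(service, 0) - actual[service]
        if gap > 0:
            to_launch[service] = gap
        elif gap < 0:
            to_kill[service] = -gap
    return to_launch, to_kill


def apply_plan(running, to_launch, to_kill):
    # Simulation only: a real platform would start and stop containers here.
    state = Counter(running)
    state.subtract(to_kill)
    state.update(to_launch)
    return sorted(state.elements())  # elements() drops zero counts


if __name__ == "__main__":
    desired = {"web": 3, "auth": 2}
    running = ["web", "auth", "auth", "auth", "legacy"]  # hypothetical drifted state
    launch, kill = reconcile(desired, running)
    print(launch, kill)
    running = apply_plan(running, launch, kill)
    print(reconcile(desired, running))  # ({}, {})
```

A production platform would run this comparison continuously, so a crashed container simply shows up as a gap on the next pass and gets replaced.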

Why we need a data center operating system

All data centers, whether public or private or hybrid, will soon adopt these hyperscale cloud architectures — that is, dumb commodity hardware glued together by smart software, containers, and microservices. This trend will bring to enterprise computing a whole new set of cloud economics and cloud scale, and it will introduce entirely new kinds of businesses that simply were not possible earlier.

What does this mean for virtualization?

Virtual machines aren’t dead. But they can’t keep up with the requirements of microservices and next-generation applications, which is why we need a new software layer that will do exactly the opposite of what server virtualization was designed to do: Aggregate (not carve up!) all the servers in a data center and present that aggregation as one giant supercomputer. Though this new level of abstraction makes an entire data center seem like a single computer, in reality the system is composed of millions of microservices, each in its own Linux-based container, and the layer delivers the benefits of multitenancy, isolation, and resource control across all those containers.
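One way to picture this aggregation is a scheduler that presents a set of servers as a single pool of CPU and memory and decides on its own which machine runs each container. The sketch below uses simple first-fit placement, a deliberately basic stand-in for the far more sophisticated schedulers real systems use; the server names, container names, and resource sizes are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Server:
    name: str
    free_cpus: float
    free_mem_gb: float
    containers: list[str] = field(default_factory=list)


def pool_capacity(servers):
    # The aggregate view: the whole pool's free resources as if it were one machine.
    return (sum(s.free_cpus for s in servers),
            sum(s.free_mem_gb for s in servers))


def place(requests, servers):
    """First-fit placement. Callers state what each container needs;
    the scheduler picks the machine. Returns {container: server name}."""
    placement = {}
    for name, cpus, mem_gb in requests:
        for s in servers:
            if s.free_cpus >= cpus and s.free_mem_gb >= mem_gb:
                s.free_cpus -= cpus
                s.free_mem_gb -= mem_gb
                s.containers.append(name)
                placement[name] = s.name
                break
        else:
            # for/else: reached only if no server had room for this container.
            raise RuntimeError(f"no server has room for {name}")
    return placement


if __name__ == "__main__":
    servers = [Server("node-a", 4, 8), Server("node-b", 4, 8)]
    print(pool_capacity(servers))  # (8, 16)
    requests = [("web-1", 2, 2), ("web-2", 2, 2), ("db", 3, 6), ("cache", 1, 1)]
    print(place(requests, servers))
    # {'web-1': 'node-a', 'web-2': 'node-a', 'db': 'node-b', 'cache': 'node-b'}
    print(pool_capacity(servers))  # (0, 5)
```

The caller never names a machine; it only states resource needs, which is the sense in which the pool behaves like one computer. Unlike a true single machine, though, a container cannot span servers, so a pool with enough total capacity can still reject a request that no individual node can fit.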

Think of this software layer as the “operating system” for the data center of the future, though its implications go beyond the hidden workings of the data center. The data center operating system will allow developers to build distributed applications more easily and safely, without having to worry about the plumbing, limitations, or potential failure of individual machines, and without having to abandon their tools of choice. They will become more like users than operators.

This emerging smart software layer will soon free IT organizations, traditionally perceived as bottlenecks on innovation, from the immense burden of manually configuring and maintaining individual apps and machines, and allow them to focus on being agile and efficient. They, too, will become strategic users rather than maintainers and operators.

The aggregation of virtualization is really an evolution of the core insight behind virtual machines in the first place. It is also an important step toward a world where distributed computing is the norm, not the exception.

This article originally appeared in InfoWorld.