In today’s AI-driven world, the ability to train AI models locally and perform fast inference on GPUs at an optimal cost is more important than ever. Building your own GPU server with an RTX 4090 or RTX 5090 — like the one described here — enables a high-performance eight-GPU setup running on PCIe 5.0 with full x16 lanes. This configuration ensures maximum interconnect speed for all eight GPUs. In contrast, most similar setups are limited by the PCIe bus version (such as PCIe 4.0 or even lower), due to the challenges of running longer PCIe extensions.

Running models locally means no API calls to external services, no data leakage, and no usage throttles. Additionally, your data stays yours and there is no sharing logs with cloud providers or sending sensitive documents to external model providers. It’s perfect for research and for privacy-conscious developers.

With that in mind, we decided to build our own GPU server using readily available, and affordable, hardware. It certainly is not production-ready, but is more than capable as a platform. (Disclaimer: This project was developed solely for research and educational purposes.)

This guide will walk you through our process of building a highly efficient GPU server using NVIDIA’s GeForce RTX 4090s. We’ll be constructing two identical servers, each housing eight RTX 4090 GPUs and delivering an impressive amount of computational power in a relatively simple and cost-effective package. All GPUs will run on their full 16 lanes with PCIe 4.0. (Note: We have built and tested our servers exclusively with the GeForce RTX 4090. While we have not yet tested them with the RTX 5090, they should be compatible and are expected to run with PCIe 5.0.)

Why build this server?

In an era of rapidly evolving AI models and increasing reliance on cloud-based infrastructure, there’s a strong case for training and running models locally, especially for research, experimentation, and gaining hands-on experience in building a custom GPU-server setup.

The NVIDIA RTX series of GPUs presents a compelling option for this type of project, offering phenomenal performance at a competitive cost.

The RTX 4090 and RTX 5090 are absolute beasts. With 24GB of VRAM and 16,384 CUDA cores on the RTX 4090, and an expected 32GB of VRAM and 21,760 CUDA cores on the RTX 5090, both deliver exceptional FP16/BF16 and tensor performance — rivaling datacenter GPUs at a fraction of the cost. While enterprise-grade options like the H100 or H200 offer top-tier performance, they come with a hefty price tag. For less of the cost of a single H100, you could stack multiple (4-8) RTX 4090s or 5090s and still achieve serious throughput for inference and even training smaller models.

Building a small GPU server, particularly with powerhouse GPUs like the NVIDIA RTX 4090 or the new RTX 5090, provides exceptional flexibility, performance, and privacy for running large language models (LLMs) such as LLaMA, DeepSeek, and Mistral, as well as diffusion models, or even custom fine-tuned variants. Modern open-source models are designed with efficient inference in mind, often using Mixture of Experts (MoE) architectures, and the 4090s can easily handle those workloads. Depending on their parameter size, many of these models can also run as dense models on a small server like ours, without requiring quantization.

Want to build your own Copilot? A personal chatbot? A local RAG pipeline? Done.

Using libraries like vLLM, GGUF/llama.cpp, or even full PyTorch inference with DeepSpeed, you can take advantage of:

- Model parallelism

- Tensor or pipeline parallelism

- Quantization to reduce VRAM load

- Memory-efficient inference with paged attention or streaming

You’re in full control of how your GPU server is optimized, patched, and updated.

Sample setup

Before we dive into the build process, let’s discuss why this particular server configuration is worth considering:

- Simplicity: While building a high-performance GPU server might seem daunting, the parts we use and our adaptations are accessible to those with intermediate technical skills.

- PCIe 5.0 future-proofing: The server offers eight PCIe 5.0 x16 slots, providing maximum bandwidth and future-proofing for high-performance GPUs. While the RTX 4090 is limited to PCIe 4.0 speeds, this setup allows for seamless upgrades to next-generation PCIe 5.0 GPUs, such as the GeForce RTX 5090.

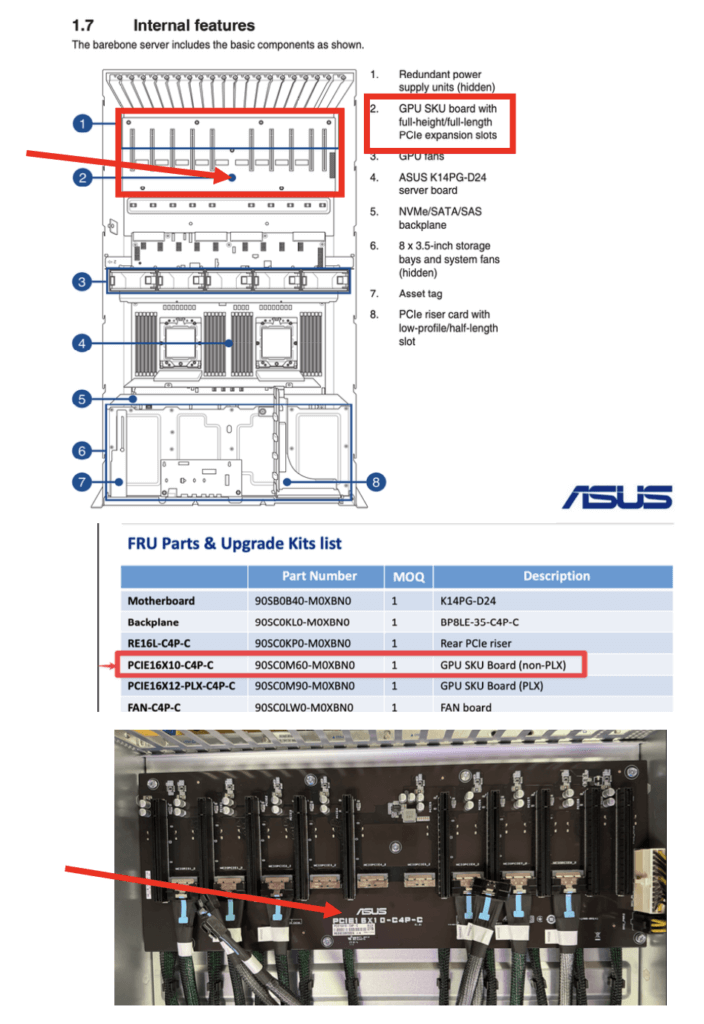

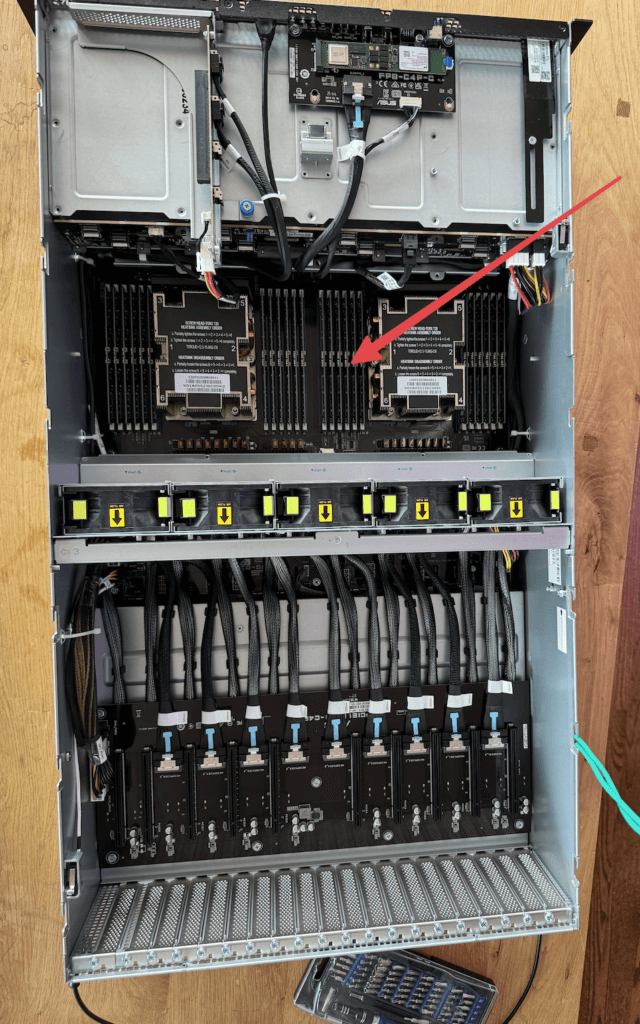

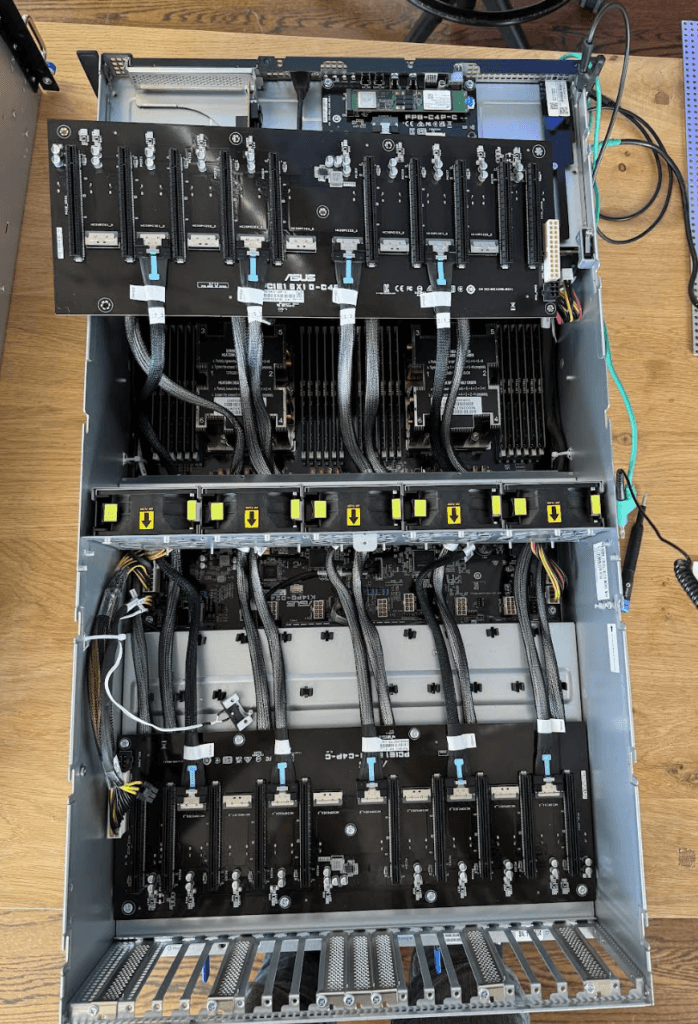

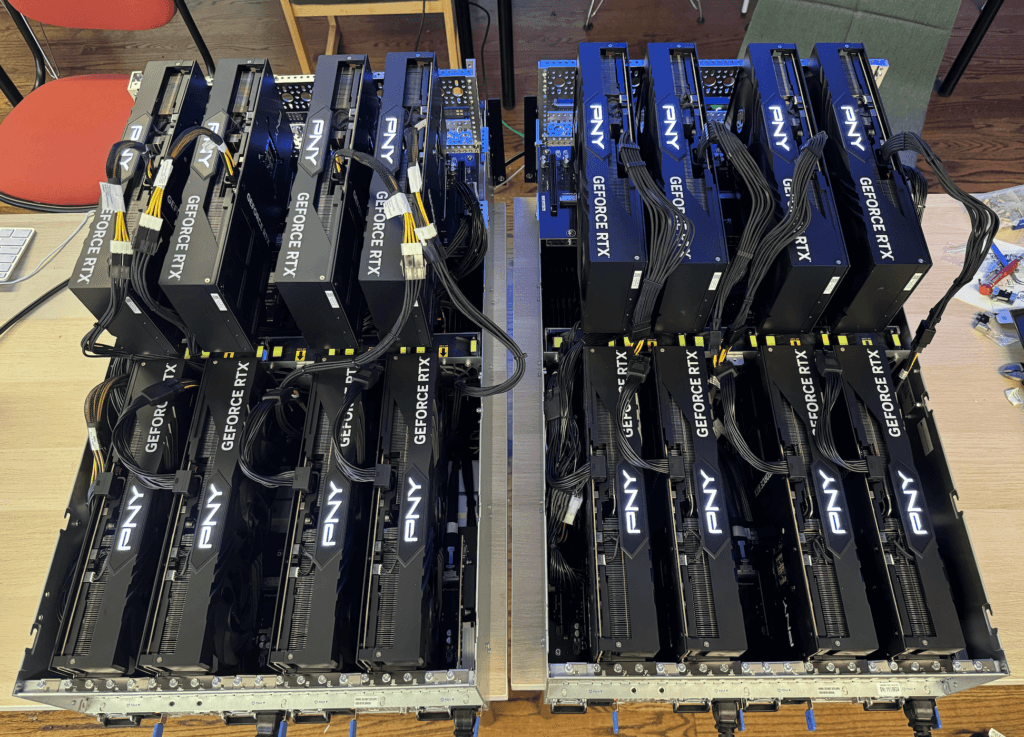

- PCIe board configuration for eight 3-slot GPUs (e.g., RTX 4090 or RTX 5090): In this setup, the PCIe board is separate from the main motherboard, a unique design that enables two independent PCIe 5.0 PCB boards to be mounted individually. This configuration accommodates all eight GPUs (four on the bottom and four on the top) without requiring additional complex and expensive PCIe retimers or redrivers. Typically, such components are needed to maintain signal integrity when PCIe signals travel across longer traces, cables, or connectors. By minimizing signal path length and complexity, this design ensures full-speed connectivity with greater simplicity and reliability.

- Superior to traditional server layouts: Many server alternatives that offer eight PCIe 5.0 x16 lanes have them directly integrated into the main motherboard. However, this layout makes it physically impossible to fit eight RTX 4090s, due to their 3-slot width. Our configuration solves this limitation by separating the PCIe boards from the motherboard, enabling full support for eight triple-slot GPUs without compromise, with a custom aluminum frame built to hold four external GPUs.

- Direct PCIe connection: The PCIe PCB card connects to the motherboard using the original communication cables that come with the server, eliminating the need for PCIe extender cables, retimers, or switches. This is a crucial advantage, as extender cables can disrupt the PCIe bus impedance, potentially causing the system to downgrade to lower PCIe versions (such as 3.0 or even 1.0), resulting in significant performance loss.

- Custom frame solution: We’ll be using a custom frame built with elements used commonly in robotics components from GoBilda to securely hold the top 4 external GPUs. This enables eight 3-slot GPUs to fit in this server setup with the original PCIe5.0 cards and cables, without the need of PCIe redrivers or PCIe cable extensions.

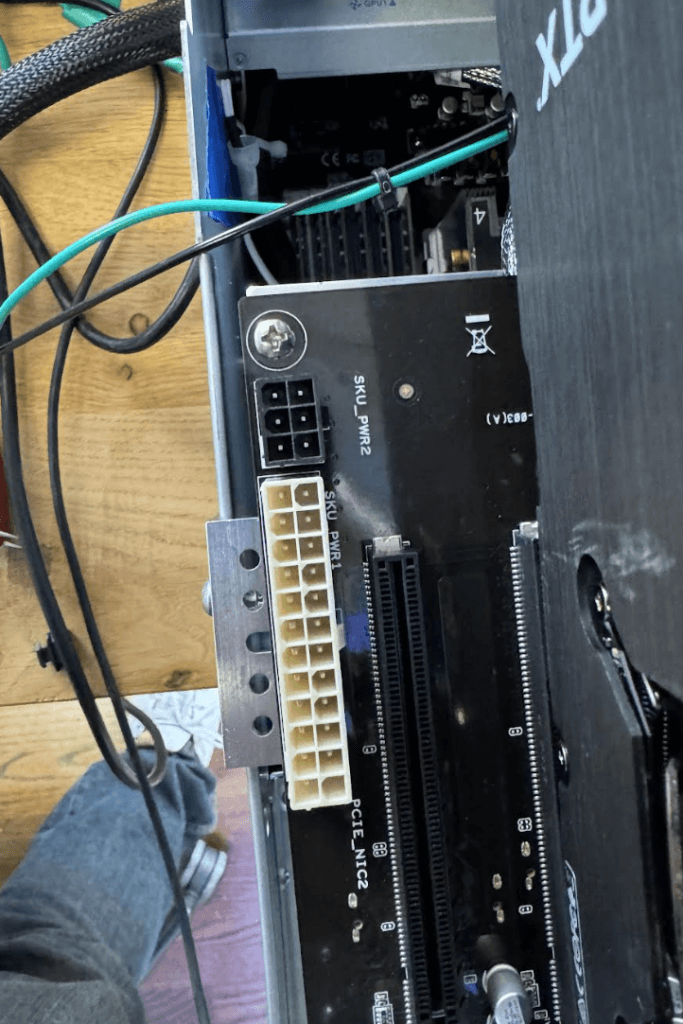

- Simple power distribution: Power is distributed to both PCIe boards using an ATX 24-Pin and a 6-pin motherboard power extension cables, an ATX 24-pin Y splitter and a 6-pin Y splitter.

- High-performance infrastructure: We operate our GPU setup on a 220V power supply and utilize a symmetrical 10G single-mode fiber internet connection.

Server specifications

Before we begin the build process, let’s review the key components and specifications of our GPU server:

- Server model: ASUS ESC8000A-E12P

- GPUs: 8x NVIDIA RTX 4090

- CPU: 2x AMD EPYC 9254 Processor (24-core, 2.90GHz, 128MB Cache)

- RAM: 24x 16GB PC5-38400 4800MHz DDR5 ECC RDIMM (384GB total)

- Storage: 1.92TB Micron 7450 PRO Series M.2 PCIe 4.0 x4 NVMe SSD (110mm)

- Operating system: Ubuntu Linux 22.04 LTS Server Edition (64-bit)

- Networking: 2 x 10GbE LAN ports (RJ45, X710-AT2), one utilized at 10Gb

- Additional PCIe 5.0 card: ASUS 90SC0M60-M0XBN0

Build process

Next, let’s walk through the step-by-step process of assembling our high-performance GPU server.

Step 1: Prepare the Server Chassis

- Start with the ASUS ESC8000A-E12P server chassis.

- Remove the top cover and any unnecessary internal components to make room for our custom configuration.

Step 2: Install RAM

- Install the 24x 16GB DDR5 ECC RDIMM modules into the appropriate slots on the motherboard.

- Ensure they are properly seated and locked in place.

Step 3: Install storage

- Locate the M.2 slot on the motherboard.

- Install the 1.92TB Micron 7450 PRO Series M.2 PCIe 4.0 NVMe SSD.

Step 4: Prepare the PCIe boards

- Install the ASUS 90SC0M60-M0XBN0 PCIe 5.0 additional card.

- Redirect four pairs of the cables (which are labeled with numbers) from the original PCIe card card that is already installed in the server (bottom PCIe card). We alternated the sequence: set 1 stays in the bottom PCIe card, set 2 goes to the top card, set 3 stays in the bottom PCIe card and so on.

Step 5: Create a “Y” splitter cable for ATX 24-pin and the 6-pin connectors

- Create “Y” splitter cable extensions to supply power to the external 90SC0M60-M0XBN0 PCIe 5.0 expansion card, which will be mounted on top of the server.

- Ensure that the “Y” splitter cable extensions are of the appropriate gauge to safely handle the power requirements of both the external PCIe card and the GPUs.

Step 6: Install lower GPUs

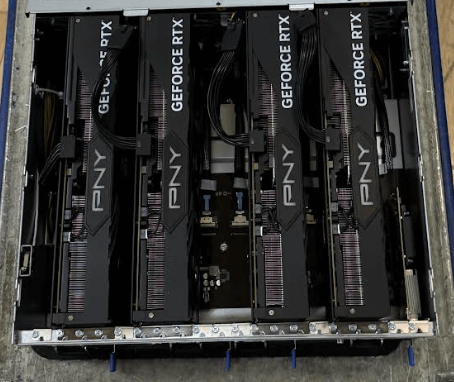

- Install four NVIDIA RTX 4090 GPUs into the lower PCIe slots on the original PCIe card located next to the motherboard.

- Ensure proper seating and secure them in place.

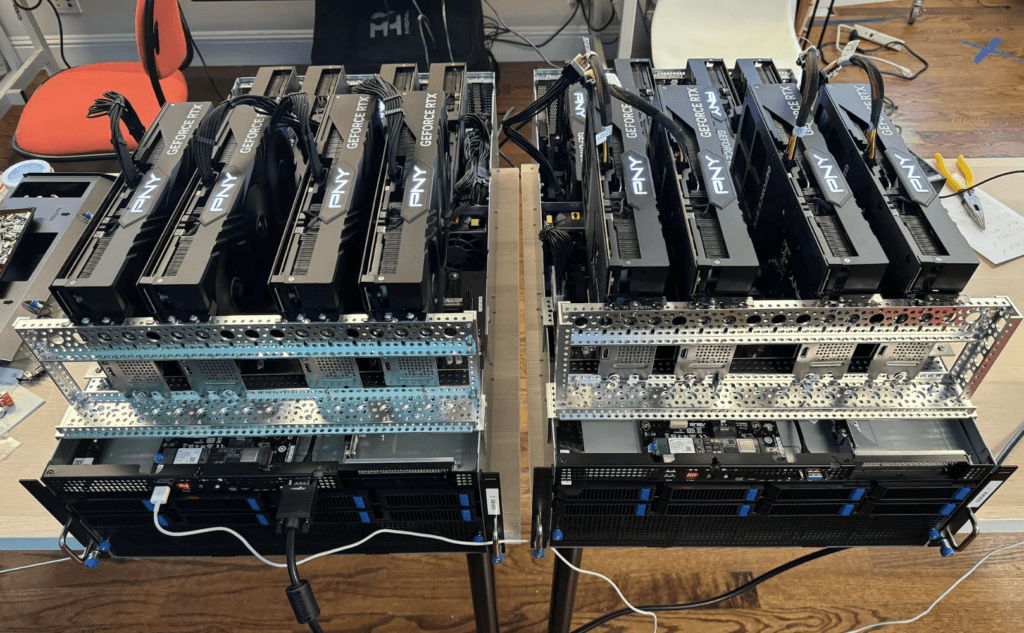

Step 7: Prepare custom frame for upper GPUs and install them

- We used a custom built frame using GoBilda components.

- Ensure the frame is sturdy and properly sized to hold four RTX 4090 GPUs.

- Ensure you are using power cables with the proper gauge to handle each GPU.

Step 8: Networking setup

- Identify the two 10GbE LAN ports (RJ45, X710-AT2) on the server.

- Connect one of the ports to your 10G single-mode fiber network interface.

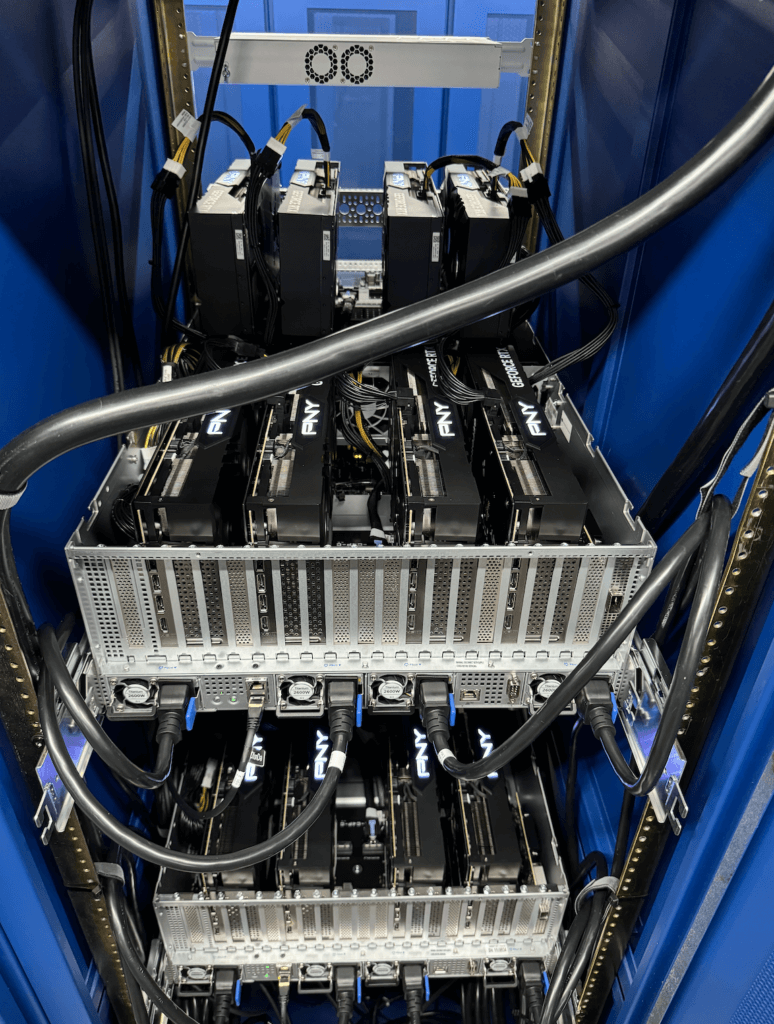

Step 9: Final assembly and cable management

- Double-check all connections and component placements.

- Implement proper cable management to ensure optimal airflow and thermal performance, like ensuring there’s enough space between servers.

Step 10: Operating system installation

- Create a bootable USB drive with Ubuntu Linux 22.04 LTS Server Edition (64-bit).

- Boot the server from the USB drive and follow the installation prompts.

- Once installed, update the system and install necessary drivers for the GPUs and other components.

Final build

Once you’ve completed all the steps, you should have a GPU server that looks like this and is ready to get to work!