When a platform shift reshapes the technology landscape, who wins?

From mainframes to PCs, desktop to mobile, unnetworked to internet, and on-prem to cloud and SaaS, technical progress in software tends to follow Schumpeter’s creative destruction: new winners emerge with new eras. As our partners Martin Casado and Sarah Wang recently explored, this dynamic is finally starting to play out in AI. After decades of AI winters, we are on the precipice of a true platform shift, one that will open even more value creation for AI companies. While much of the focus has been on new use cases driven by LLMs and generative AI, the value creation will go beyond generative AI to include non-generative use cases, especially around increasingly mature computer vision models.

As AI brings about a new era in computing, who will win the race to capture value? To explore that question, we looked back at the most recent platform shift—from on-prem computing to SaaS and cloud. We studied revenue generation in our sample of 262 public B2B software companies over the last 2 decades to understand the competitive dynamics that both played out between startups and incumbents* and determined value capture up and down the software stack, from infrastructure to applications.

Here, we share what we learned about the market dynamics from the SaaS era that are likely to manifest in the AI era, most notably that platform shifts are a positive-sum game and that the emergence of a new infrastructure layer may produce the biggest winners. We also unpack why the shift to AI is likely to be much bigger than the shift to SaaS, why it appears to be happening even faster than the shift to cloud, and some potential sources of defensibility unique to AI products.

A positive-sum game with trillions up for grabs

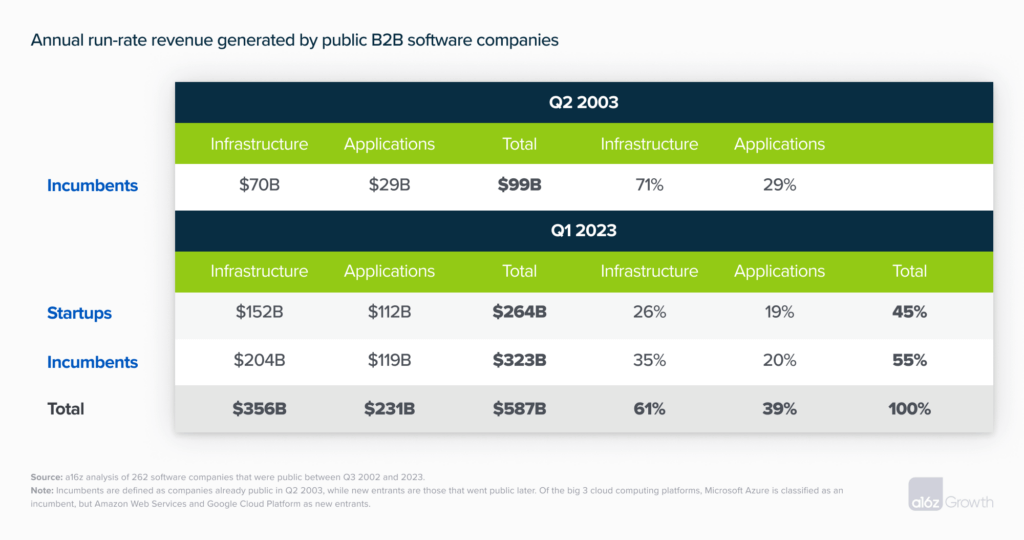

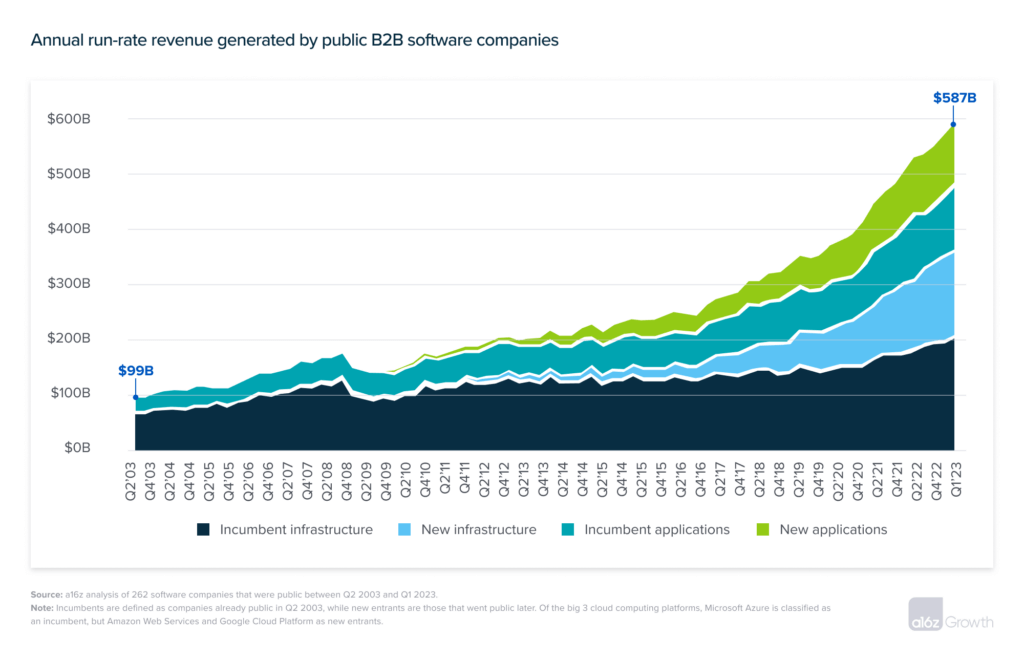

Despite popular David-versus-Goliath, zero-sum disruption narratives, platform shifts are usually positive-sum games. As technology advances, the size of the pie grows for both startups and incumbents. Since the Nasdaq bottomed out in 2003 Q2, aggregate revenue across public B2B software companies has grown from $99B to $587B, a 5.9x increase in annual revenue generated by both incumbents and startups. Software incumbents have grown from generating $99B to $323B, maintaining 55% of market share. Or, put another way, incumbents have added revenue, but lost 45% of the market to new entrants. And 2 decades into SaaS, there’s still plenty of value left to capture: Morgan Stanley recently estimated cloud workloads to be only 29% penetrated in the enterprise.**

If this “business as usual” 5.9x rate of software expansion continues over the next 2 decades, public B2B software revenue will grow to $3T+. However, we consider $3T+ the lower bound of the opportunity ahead. While SaaS unlocked powerful network effects in collaboration software—such as Slack—that were otherwise not possible on-prem, most of the biggest winners at the applications layer have been cloud implementations of familiar on-prem products: Salesforce versus Siebel in CRM, Workday versus PeopleSoft in HRIS, ServiceNow versus BMC in ITSM, NetSuite versus SAP in ERP, Figma versus Adobe in design, and so on. AI is not just a business model and software delivery innovation—it’s a new way of tapping into, synthesizing, and advancing humanity’s collective knowledge and economic productivity. AI will thus create value akin to how the internet created value: by opening entirely new ways of doing things, not just better ways of doing old things.
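The arithmetic behind these figures can be checked with a minimal sketch. It uses only the revenue numbers quoted above; the variable and function names are illustrative assumptions, and the projection simply assumes the same multiple repeats over the next 2 decades.

```python
# Figures quoted in the text, in billions of USD.
REVENUE_START_B = 99.0    # aggregate public B2B software revenue at the Nasdaq trough
REVENUE_NOW_B = 587.0     # aggregate public B2B software revenue today
INCUMBENT_NOW_B = 323.0   # portion of today's revenue generated by incumbents


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


def share(part: float, whole: float) -> float:
    """Return `part` as a fraction of `whole`."""
    return part / whole


multiple = growth_multiple(REVENUE_START_B, REVENUE_NOW_B)
incumbent_share = share(INCUMBENT_NOW_B, REVENUE_NOW_B)
startup_revenue_b = REVENUE_NOW_B - INCUMBENT_NOW_B

# Assumption: the historical multiple repeats unchanged over the next 2 decades.
projected_b = REVENUE_NOW_B * multiple

print(f"growth multiple:   {multiple:.1f}x")
print(f"incumbent share:   {incumbent_share:.0%}")
print(f"startup revenue:   ${startup_revenue_b:.0f}B")
print(f"projected revenue: ${projected_b / 1000:.1f}T")
```

Under these inputs the multiple rounds to 5.9x, the incumbent share to 55%, and the projection lands above $3T, in line with the lower bound described above.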

If we broaden the scope beyond B2B software to include all consumer internet applications, from ridesharing to food delivery, data from the US Bureau of Economic Analysis suggests that cloud software grew the market size of the US digital economy from $1.0T in 2005 to $2.4T in 2021, a trend of 9.4% compound annual growth in software demand that we don’t anticipate slowing anytime soon. The ultimate value-add of AI software goes beyond the exact dollar figures captured in software budgets to all the economic activity it will support.

Market dynamics at the applications layer

While we can’t derive a natural law of software physics from a single platform shift, in the SaaS era, startups won more market share at the application layer than at the infrastructure layer. The overall software mix between cloud infrastructure and SaaS applications started at roughly 70/30 in 2003 and matured to 60/40 in 2023 Q1. Over that time, new entrants in our sample have captured roughly half ($112B) of the $231B of application dollars, but only 40% of the $356B of infrastructure dollars.
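As a rough check on the layer split, the sketch below recomputes the shares from the dollar figures in the paragraph above. The names are illustrative assumptions, and the infrastructure dollar amount for startups is derived from the quoted 40% rather than stated in the text.

```python
# Figures quoted in the text, in billions of USD (2023 Q1).
APP_TOTAL_B = 231.0          # application-layer revenue
APP_STARTUP_B = 112.0        # application revenue captured by new entrants
INFRA_TOTAL_B = 356.0        # infrastructure-layer revenue
INFRA_STARTUP_SHARE = 0.40   # share of infrastructure revenue captured by new entrants

# Startup share of application dollars ("roughly half").
app_startup_share = APP_STARTUP_B / APP_TOTAL_B

# Derived, not quoted: startup infrastructure dollars implied by the 40% share.
infra_startup_b = INFRA_TOTAL_B * INFRA_STARTUP_SHARE

# Overall mix between infrastructure and applications ("60/40").
total_b = APP_TOTAL_B + INFRA_TOTAL_B
infra_mix = INFRA_TOTAL_B / total_b
app_mix = APP_TOTAL_B / total_b

print(f"startup share of applications: {app_startup_share:.0%}")
print(f"implied startup infrastructure revenue: ${infra_startup_b:.0f}B")
print(f"infrastructure/application mix: {infra_mix:.0%}/{app_mix:.0%}")
```

The two layers sum to the $587B aggregate cited earlier, and the mix comes out close to the 60/40 split described above.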

The fact that startup applications have taken more market share from incumbents than startup infrastructure companies have is consistent with the common intuition that applications tend to be less defensible products. Mature developer tooling and off-the-shelf core infrastructure make it easier to build new versions of familiar applications that steal customers from the prior generation. It’s much harder to convince customers to rip-and-replace core infrastructure, which creates a longer transition cycle from old to new infrastructure and buys incumbents more time to adapt.

Are incumbents adapting faster in the AI era?

SaaS–era incumbents faced a series of hurdles to adapt. They required new engineering talent to architect a cloud implementation of the legacy product, refreshed product development practices to continuously deploy software updates, and restructured sales organizations and comp models for subscription business models. Not surprisingly, many were slow to adapt. Siebel launched a cloud CRM product over 3 years after Salesforce launched, and PeopleSoft didn’t launch a cloud-hosted HRIS until over a decade after SuccessFactors came to market.

This time around, incumbents have ostensibly been faster to adapt and launch applications than they were in the SaaS era. ChatGPT launched in November 2022, and the vast majority of big SaaS incumbents have since shipped their own implementations of generative AI products, including Einstein GPT from Salesforce, Charlotte AI from CrowdStrike, ChatSpot from HubSpot, and Firefly from Adobe.

It is also important to note that while incumbents appear to be adapting faster, the shift to AI is also playing out faster than the shift to cloud and SaaS. It took ~5 years for SaaS apps to really get going once cloud infrastructure was in place. But in just 6 months, ChatGPT reached 100M users and launched plug-ins and APIs for developers to build on top of GPT. As a result, the talent and effort needed to ship an AI application are far less than what was needed to adapt an on-prem product for cloud, and it will only continue to get easier as the LLM ecosystem and off-the-shelf tooling further mature.

Given how easy it is to launch a ChatGPT-powered app, we view this flurry of activity more as incumbents merely stepping up to the plate, not evidence that they’ve cracked the innovator’s dilemma this time around. This first batch of solutions shipped by incumbents tends to fall into two buckets: 1) thin user interfaces that expose the legacy product in a chat-based format without delivering any new capabilities, or 2) products in categories that are already saturated due to indefensible use cases (e.g., producing marketing copy).

For SaaS incumbents, the real winners at the application layer are likely to be those who can figure out how to evolve a system of record into a system of prediction and, eventually, execution. Enterprise SaaS CRMs beat on-prem incumbents because they were more useful systems of record, with features that made it possible to better track and forecast sales. Now, as incumbents, these SaaS-era sales tools have to compete with AI sales intelligence products that, for instance, can already tell sales reps, “this is the next most promising customer to talk to, and here’s what to say,” or even replicate a convincing phone conversation directly with customers. While a handful of experienced engineers can figure out how to ship a thin LLM–powered UX on top of an old product, more meaningful innovation will require building entirely new engineering teams native to the technology.

When a platform shifts, a new infrastructure layer emerges

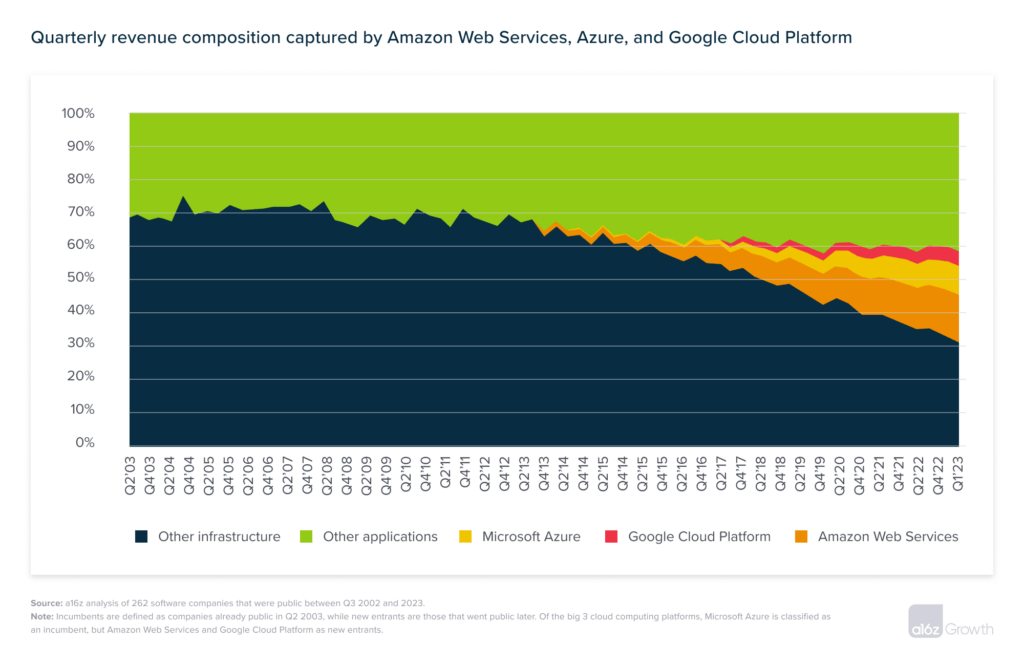

When infrastructure innovation does occur, such as with the rise of big cloud platforms, it creates new layers in the software stack and unlocks tremendous value. Amazon Web Services (launched in 2006), Microsoft Azure (2010), and Google Cloud Platform (2013) now account for $173B of run-rate revenue—30% of all public software revenue. With increasing economies of scale, the cloud computing platforms have come to dominate in the order in which they came to market, with AWS at 33%, Azure at 22%, and GCP at 10%.

Cloud becomes the incumbent

In the AI era, the same market forces are already emerging at the model layer, with OpenAI leading the pack in a competitive setup similar to the early years of AWS. This time around, AWS and the other big cloud players are the incumbents. They see AI as an extension of their legacy cloud compute business. Given the high compute demands of running AI models, the current chip shortages, and the capabilities these cloud providers have in providing their own chips or building out data center capacity on third-party chips, most have rolled out their own foundation models to compete at both the compute and model layers.

The nature of cloud computing also creates a symbiotic relationship between infrastructure and applications that will continue as AI infrastructure evolves. Core infrastructure unlocks new opportunities for applications, and scaling application volume increases demand for infrastructure, pulling infrastructure providers toward maturity faster.

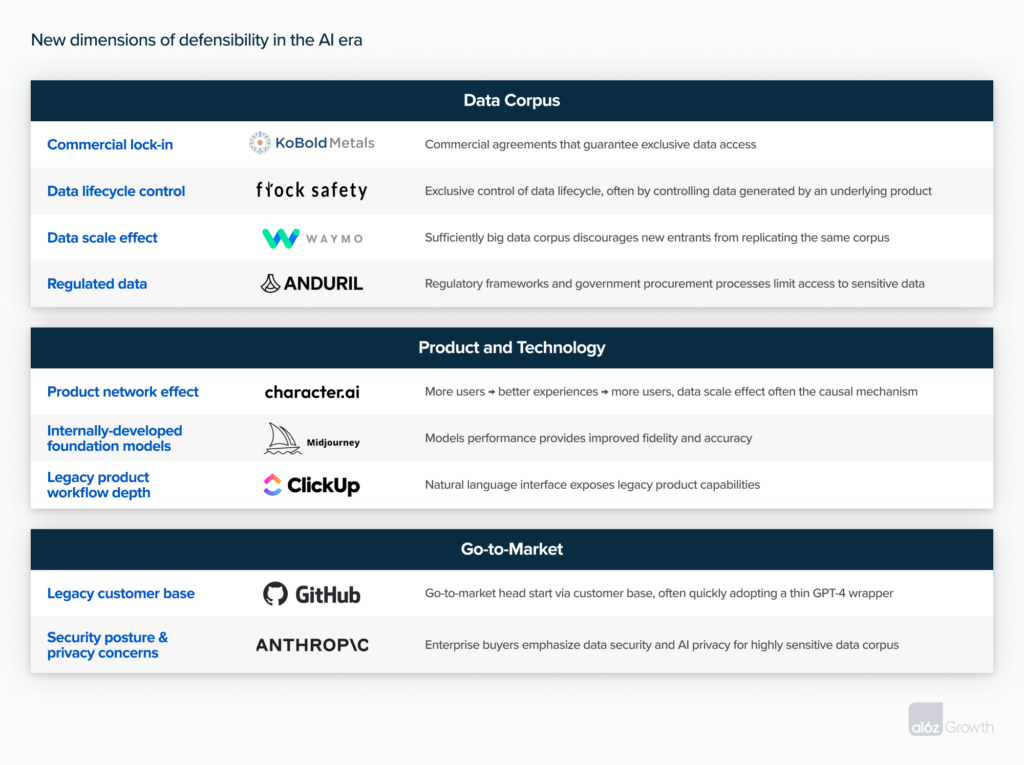

Defensibility ultimately determines long-term value capture

Where the value of AI accrues in the long run is ultimately a question of defensibility. In the SaaS era, the biggest sources of defensibility were typically hard-to-copy technology that won developer mindshare (e.g., Databricks), platform systems of record that served as the foundation for enterprise workflows and downstream applications (e.g., Salesforce), network effects that were embedded directly in the product experience (e.g., Slack), and go-to-market dominance unlocking a flywheel of customer feedback that informed product expansion (e.g., Workday).

We expect all these sources of defensibility to be just as important in the AI era. But the underlying technology behind AI products also introduces new potential competitive advantages. Our early-stage partners have written about value capture in generative AI platforms and continue to explore how defensibility will play out in the infrastructure of AI. Here, we share our early framework for assessing defensibility in growth-stage AI companies, when competitive advantages transition from early theories to the factors that govern long-term market winners and losers.

In 2019, we wrote about why we believe that in many, if not most, cases data moats may be an empty promise. However, as more AI startups have matured, we have seen data provide a durable competitive advantage when AI products depend on proprietary data or data scale as a crucial ingredient and key differentiator. For instance, precious metals exploration company KoBold Metals establishes commercial agreements with major mining companies for exclusive access to their historical records of various prospectivity sites, providing a competitive moat. As a defense startup, Anduril had to win over the right federal partners to even get access to sensitive data. Flock Safety has assembled the biggest computer vision data corpus for law enforcement and, by providing camera hardware, can control the data through the whole lifecycle, from capture through implementation. This unlocks a flywheel of more customers → more cameras → more data → better predictions → safer communities → more customers, etc.

While data moats tend to get the most attention in the AI defensibility debate, this latest cycle in generative AI also introduces other new potential vectors of defensibility. Character.AI has a product network effect such that, as users experience the product, that usage becomes training data that feeds into improving the product experience. Midjourney has focused on developing the most performant proprietary foundation model in order to create the best application-layer use case on top.

In the first inning of generative AI products, some potential sources of defensibility may favor SaaS–era incumbents. For instance, companies that got to scale in the SaaS era can use AI to add a relatively thin natural language user interface on top of mature workflow capabilities. In this case, incumbents can ship generative AI products that pack a punch right out of the gate, even if those capabilities aren’t actually new, but are just surfaced to users in a new way. Similarly, incumbents may have the edge when they can sell into legacy customer bases or position themselves around robust security or compliance reputations to win.

We think AI will completely reinvent the workflow and UI for software, as AI software increasingly acts as a system of prediction and execution, not just a system of record. The speed with which we already see this shift happening means that nimble companies that can attract AI talent and move fast with some distribution are well-positioned to win.

Consumers win in the long run

Regardless of where defensibility comes from and who ultimately captures market value, the consumer will ultimately be the biggest winner. A paper back in 2019 found that consumers value “free” products in shockingly big dollar terms, estimating a willingness to pay as high as $17.5K for search engines, $8.4K for email, and $1.2K for streaming services. Given all the interesting consumer applications already emerging—virtual therapists accessible 24/7, physicians informed by the entire corpus of medical knowledge, automated secretarial assistants that deal with all the monotonous details of daily life—the scale of consumer surplus to come from AI is sure to spur a wave of incredible innovation. And if software history tells us anything about innovation, it’s that great entrepreneurs will always find ways to build important, durable companies in each new technological era.

Footnotes

*We define “incumbents” as companies that were already publicly listed before the Nasdaq bottomed out in 2002 Q3, and “startups” as companies that went public afterwards.

**Morgan Stanley Research, 2Q23 CIO Survey